The internet and web emerged from the vision of an improbable group of hackers, and not the work of tech companies or government programs. They hoped to build a system of free global communication.

Transmission protocols, browsers that could read HTML code, and Apache server software were created and distributed at no cost to users. With a free structure, the hackers hoped content could also be free.

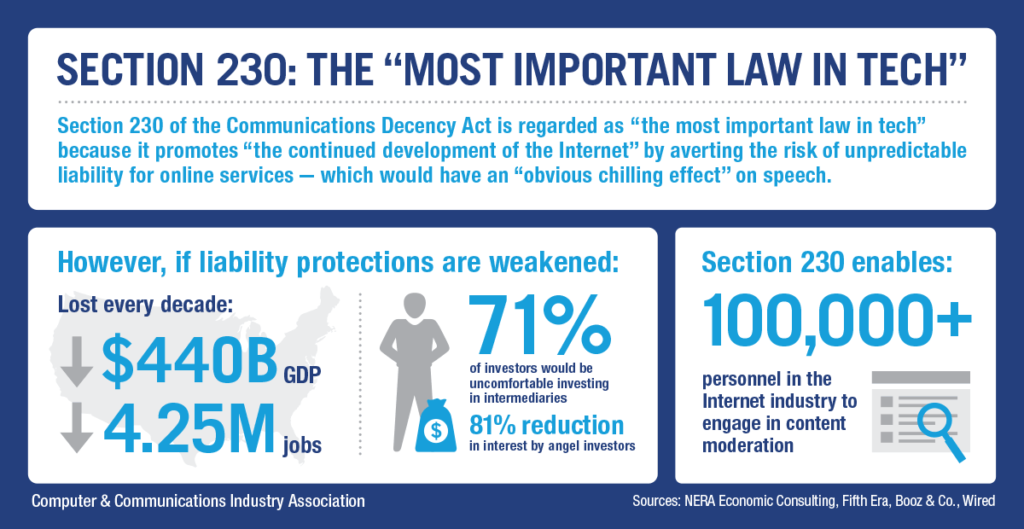

That spirit of freedom is reflected in the preamble of Section 230 of the Telecommunications law (US Code Title 47) which says the internet is “an extraordinary advance in the availability of educational and informational resources to our citizens.”

Sometimes called the “Magna Carta of the Internet,” Section 230 is the legal foundation of the world’s largest communications system. To understand why the foundation of that system is now controversial, it helps to understand its origin.

Regulating the emerging internet 1980s – 90s

In the late 1980s and early 1990s, when the internet was brand new, the average computer user would access text-only services with primitive modems through dial-up “Internet Service Providers” (ISPs). These ISPs were no more responsible for the content of internet communications than a telephone company would be for the content of a regular voice phone call.

Often they would distribute media services that published the content, but just as often they would create those services and act as publishers.

Traditional media — newspapers, magazines, radio, and television — have always been responsible for all content published and distributed. Responsibility for an advertisement published in the New York Times in 1960 was the issue in NY Times v Sullivan.

But the unusual legal form of the new digital media soon became clear with two libel cases from the early 1990s: Cubby v CompuServe (1991) and Stratton Oakmont v Prodigy (1995). If an ISP or a content provider took any steps to edit or block some content, they were considered to be responsible for ALL of that content. If the ISP acted as a distributor and took a “hands off” approach, without any editing whatsoever, they were NOT considered responsible for any content. And so, to be safe, the ISPs did nothing at all.

This lack of responsibility led to increasing concern over the new medium. One of the most alarming things was that pornographic videos were easily available to children, and a kind of moral panic took place, as seen in this July 3, 1995 Time Magazine cover.

Congress reacted by passing the 1996 “Communications Decency Act” to regulate pornographic and indecent material on the internet. It became law but was immediately challenged as overly broad by the ACLU, and the law was defended by then-Attorney General Janet Reno. In Reno v ACLU, the US Supreme Court upheld part of the law and struck down other parts. The part that survived — Section 230 of the CDA — was called the “good samaritan” provision because it protected private blocking and screening of offensive material.

And so, after Reno v ACLU, if obscene or libelous or illegal materials were placed on a site by someone who didn’t work for the site (a third party), that company could remove those materials without risk of being sued. In other words, the law was meant to protect the social media company from lawsuits over third-party content. That’s why it was a “good samaritan” law, because it protected people who were trying to do the right thing. It was the third party, the one placing the materials online, who was supposed to bear the blame, take down offensive or libelous or illegal materials, and/or pay the damages.

(1) Treatment of publisher or speaker — No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

(2) Civil liability — No provider or user of an interactive computer service shall be held liable on account of-

(A) any action voluntarily taken in good faith to restrict access to or availability of material that the provider or user considers to be obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable, whether or not such material is constitutionally protected; or

(B) any action taken to enable or make available to information content providers or others the technical means to restrict access to material described in paragraph 1.

Zeran v AOL: First internet libel test case

The first major test case, Zeran v AOL, 1997, held that the internet service provider AOL was not responsible for harms caused by false and malicious attacks on Kenneth Zeran through an AOL opinion chat.

The libelous messages occurred a week after the April 19, 1995 bombing of the federal building in Oklahoma City that killed 168 people. An anonymous post advised people to “Visit Oklahoma — It’s a BLAST!” and to contact Zeran for t-shirts. Zeran had nothing to do with the messages, and asked AOL to take them down.

AOL took down the offending posts but declined to post retractions. The messages kept coming and soon drew widespread media attention, which harmed Zeran’s reputation and ability to work.

The US District Court for the Eastern District of Virginia said that AOL was not liable, and that Section 230 of the CDA “preempts a negligence cause of action against an interactive computer service provider arising from that provider’s distribution of allegedly defamatory material provided via its electronic bulletin board.” The US Court of Appeals for the Fourth Circuit affirmed that ruling later in 1997.

However, as the Internet became increasingly popular, the issue of moderation became a major problem.

Some obviously harmful content (for example libel, private facts, incitement to violence) did not have to be edited or controlled in any way. Many companies believed that trying to police the enormous flood of content coming through the Internet and the World Wide Web would be too expensive and therefore would hold back the development of promising new digital media.

Section 230 and media responsibility after Zeran

Section 230 insulates ISPs and social media platforms like Facebook, Twitter, YouTube, TwitchTV, etc. from responsibility for violations of law in user-generated (third party) posts. The author of a Facebook post or the producer of a YouTube video is still responsible for violations of the law, but platforms like Facebook or YouTube are not.

CDA Section 230 meant that ISPs and other platforms would be free to take down, edit, or re-prioritize third party posts, but that’s not the way it has worked out. Even when a video or a post is a clear violation of the ISP’s own terms of service, under Section 230 the ISP is under no obligation to take down objectionable material.

There are several minor exceptions: Fair housing laws and copyright laws. But these are relatively small issues compared to some of the vicious and outrageous third party posts in other areas.

Most of these individual users can’t afford to be defendants in any kind of litigation. By the same token, a plaintiff will rarely recover legal costs, and so low-income intransigent libel defendants tend to face injunctions and criminal contempt charges for strongly held but inaccurate views.

And so a side effect of democratizing digital media is that everyday bar-room ranting can easily be transformed into criminal contempt.

The controversy over Section 230 and social media

Criticism of blanket indemnification of social media companies includes:

- Harm from algorithmic design through harmful content or discriminatory outcomes.

- Profit over safety and well-being of users.

- Spread of misinformation and hate speech, for example, against Rohingya people of Myanmar in 2022.

- Lack of transparency, including refusals to allow external researchers like Joan Donovan access to information.

Defending Section 230

In defense of Section 230, the Electronic Frontier Foundation says: “Section 230 is an essential legal pillar for online expression. It makes only the speaker themself liable for their speech, rather than the intermediaries through which that speech reaches its audiences. This makes it possible for sites and services that host user-generated content to exist, and allows users to share their ideas without first having to create their own individual sites or services. This gives many more people access to the content that others create, and it’s why we have flourishing online communities for many niche groups, including sports teams, hobbyists, or support groups, where users can interact with one another without waiting hours or days for a moderator or an algorithm to review every post.”

There are many other defenders of Section 230, including Anupam Chander, who wrote in a Yale Law Review article:

The broad exercise of [free speech] by groups often omitted from the dominant cultural and news platforms available, [has] permitt[ed] platforms to moderate speech that targets certain groups. For better or worse, Section 230 helped build the global internet we know today. Any efforts to restrict Section 230 must grapple with the implications of such restrictions for global speech.

Critics’ point of view

- Critics argue that the companies that distribute information, including Google (Alphabet), Apple, Microsoft, Amazon, and Meta (Facebook), among others, are now by far the world’s largest corporations and can certainly afford to moderate harmful content.

- In fact, industry observers estimated in 2022 that 10,000 content moderators worked for TikTok, 15,000 for Facebook, and 1,500 for Twitter. However, in 2025, Facebook and Twitter reacted to criticism and announced they would stop most content moderation and replace it with user-generated “community notes.” And so, to be safe, social media did nothing at all.

- Now that the companies originally protected by Section 230 are corporate giants, they are far more capable of taking responsibility for what is published on their sites by third parties. This is the theory behind the European Union’s Digital Services Act (DSA), Digital Markets Act (DMA), and AI regulation.

Whistleblowers: The Facebook papers

Adding fuel to the fire were the allegations made by Facebook whistleblower Frances Haugen in her October 5, 2021 testimony before the US Senate Subcommittee on Consumer Protection, Product Safety, and Data Security.

Haugen said that the social network’s algorithms promote angry content to keep people engaged on the platform. Facebook knew that its policies and algorithms were stoking violence and hatred, but also knew that this increased user “engagement” and therefore profits. They chose money over safety, she alleged.

Haugen said:

“The company’s leadership knows how to make Facebook and Instagram safer, but won’t make the necessary changes because they have put their astronomical profits before people. Congressional action is needed. They won’t solve this crisis without your help.”

Haugen also blamed Facebook for fanning genocide and ethnic violence in Myanmar and Ethiopia.

This is only one set of many allegations against Facebook and social media in general. Many others have followed Haugen in subsequent years: Sophie Zhang revealed evidence of foreign political manipulation and other harms on the platform; Cayce Savage and Jason Sattizahn testified that Meta was aware of how its products harmed children and deliberately suppressed this information.

Ideas about Reforming Section 230

There are three basic positions on Section 230 regulation: Too much (conservative), not enough (liberal) and don’t even touch it (libertarian).

1. On the “too much” side, many in the Trump wing of the Republican party believe that the big social media companies have no business correcting them, even if what they say may be false, harmful or defamatory from all but the most partisan perspectives. Donald Trump hated the law and has tried repeatedly to get rid of it, arguing in 2020 that Section 230 facilitated the spread of foreign disinformation and was a threat to national security and election integrity.

Ultra-conservative Justice Clarence Thomas has proposed using “common carrier” and “public accommodation” analogies to structure even further deregulation of the internet and web, but these analogies have been faulted as inappropriate given the neutrality expected of a public carrier, which cannot be neutral if social media companies use algorithms to individualize content. This was affirmed by the US Supreme Court in Moody v NetChoice in 2024.

2. Democrats and some center-right Republicans argue that Section 230 has gone too far, and that social media should at least be required to take down harmful content that is violent, defamatory or invasive. In September 2022, President Joe Biden announced support for reform efforts.

3. On the libertarian side, free speech advocates like the Electronic Frontier Foundation are adamant about the value of Section 230, while also acknowledging that the tech giants don’t do enough to combat hate speech and disinformation online. This reflects the position of the tech giants themselves, which have been fighting to hold back any and all regulation.

Could the courts treat a platform’s third party content responsibilities separately from its own internal design, algorithms, and business practices? Yes, but in a number of cases, courts have found that Section 230 shielded platforms like Meta and YouTube from claims alleging that their algorithms recommended radicalizing content.

In Gonzalez v. Google (2023), for example, the Supreme Court said that YouTube / Google was not liable for radicalizing content but left the door open to further rulings. The case involved liability for the death of American student Nohemi Gonzalez, who was killed in a 2015 ISIS terrorist attack in Paris.

FURTHER READING

Section 230: A Brief Overview, Feb. 2024, Congressional Research Service.